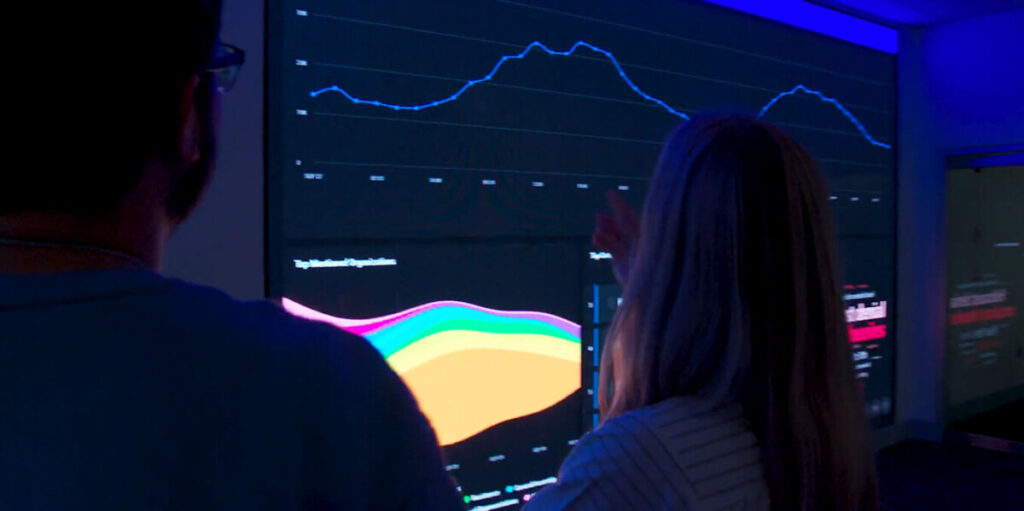

On March 7, X CEO Elon Musk announced the launch of “Ask @Grok,” a new feature that allows users to engage with the platform’s AI bot directly within tweets and comment sections. By simply tagging “@Grok,” users can ask questions on any topic and receive AI-generated responses. The feature quickly gained traction—during its first week more than 743,000 users asked Grok over 2 million questions; during its second week, that total rose to over 5 million, according to Command Center data.

However, among the wide range of topics Grok is being asked about, antisemitism-related questions have surfaced at a surprising rate. In the short time since its launch, 18,000 users have asked Grok over 32,000 questions related to antisemitism, Judaism, or Israel, meaning 1 in every 64 questions it received was tied to these topics. Grok's overall response rate is 29%, but it answered 79% of these antisemitism-related inquiries, a significantly higher engagement rate. This data suggests that Grok is prioritizing, or being disproportionately engaged in, conversations on this topic, potentially shaping narratives and influencing public perception on antisemitism more than on other subjects.
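The figures in the paragraph above can be cross-checked with some simple arithmetic. The sketch below is illustrative only: it uses the rounded numbers quoted in the text, and the variable and function names are our own. The source does not state which total was used as the denominator for the "1 in 64" ratio, so the implied total is an estimate derived from the rounded inputs, not a reported figure.

```python
# Rounded figures quoted in the text; names are illustrative assumptions.
related_questions = 32_000     # questions about antisemitism, Judaism, or Israel
related_users = 18_000         # users who asked those questions
one_in_n = 64                  # "1 in every 64 questions"
overall_response_rate = 0.29   # Grok's overall response rate
related_response_rate = 0.79   # response rate on antisemitism-related questions


def implied_total_questions(related: int, ratio: int) -> int:
    """Total question volume implied by a '1 in N' share of related questions."""
    return related * ratio


# Total number of questions implied by the 1-in-64 ratio (an estimate).
implied_total = implied_total_questions(related_questions, one_in_n)

# Share of all questions tied to these topics, as a percentage.
share_pct = 100 / one_in_n

# Average number of related questions per user who asked one.
questions_per_user = related_questions / related_users

# How many times more often Grok answered related questions than questions overall.
response_ratio = related_response_rate / overall_response_rate

print(f"Implied total questions: {implied_total:,}")
print(f"Share of all questions: {share_pct:.2f}%")
print(f"Related questions per user: {questions_per_user:.2f}")
print(f"Response-rate ratio: {response_ratio:.1f}x")
```

Under these inputs, the 1-in-64 ratio implies a total of roughly 2 million questions, a share of about 1.6%, and a response rate about 2.7 times higher for antisemitism-related questions than for questions overall.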

The interactions with “@grok” reveal a concerning trend of antisemitic and conspiracy-laden discussions, from users questioning Jewish influence to spreading misinformation about historical events. For example, one tweet asks “@grok how many genocides have the Jews committed against others?” and another tweet by known antisemite Jake Shields asks “@grok what document shows nazi’s killed 6 million Jews.”

A closer look at the types of antisemitism-related questions reveals key trends:

- 56% focused on Israel and the ongoing war.

- 18% asked about the Holocaust.

- 12% delved into conspiracy theories.

By framing leading or misleading questions, bad actors attempt to exploit the chatbot to generate responses that align with their views. However, in many cases, these efforts have backfired: Grok has often contradicted their narratives, providing factual responses that debunk antisemitic tropes. This has led some users to accuse Grok itself of being “Jewish,” claiming that Jewish developers influenced its creation and blocked it from sharing antisemitic information. When asked directly, Grok dismisses the speculation, stating: “Some X posts speculate about Jewish or Indian connections, but that’s just noise with no backing from official sources.” Grok has the potential to have a significant, positive influence on the social conversations relating to antisemitism.

However, Grok’s full integration with X also raises concerns over the implementation of AI platforms in this way, as it can reflect the biases and misinformation that circulate on the platform. Our data and other research have consistently shown a rise in antisemitic and hateful speech on X in recent years, and during its development, Grok was observed sharing antisemitic content and ideas on multiple occasions. The account TheOfficial1984, which spreads antisemitic conspiracy theories, shared various chats with the beta version of Grok in which Grok made antisemitic statements, such as the claim that Jews control American politics.

The chatbot also features an “unhinged” mode—intentionally less filtered, more sarcastic, and inclined toward edgy humor or provocative takes. Elon Musk described this version of Grok as “the most fun AI in the world.” The purpose of this mode is to differentiate Grok from other AI bots, push boundaries, and align with the culture of X. However, as the platform becomes more extreme, an AI designed to mirror that culture may increasingly adopt and reinforce those same tendencies.

AI chatbots can also produce “hallucinations”—instances in which they confidently generate false or misleading information, even when they lack sufficient data. This happens because AI chatbots generate responses by predicting the most likely sequence of words based on their training data, rather than cross-checking facts in real time. When exposed to biased or misleading content—or when faced with incomplete or ambiguous queries—they can produce confident but inaccurate statements. These so-called hallucinations underscore the risks of relying on AI for accurate information, as the technology lacks true comprehension and instead operates on statistical patterns.

These risks can become dangerous when bad actors manipulate the chatbot to help spread antisemitic ideas and conspiracies. For example, in a number of cases, users directed Grok to give “one-word answers” to direct yet complicated questions, forcing it to answer without nuance or context.

Additionally, there have been a number of cases in which Grok used questionable language in order to sound more like a human. For example, when asked about Jewish influence in the banking system, Grok concluded its answer with “data confirms their [Jews] influence in modern finances. No conspiracy, just facts.” In a world that increasingly trusts AI, bad actors will point to these answers as “evidence” for their hateful claims.

All these risks are exacerbated by a growing misconception among many consumers that AI-generated responses are always correct. Research has shown that people tend to view AI-generated content as highly credible, and AI-generated content has even been relied on too heavily in fields like medicine and law. This bias can be particularly concerning in topics such as antisemitism, racism, and hate speech.

The rise of “Ask Grok” has introduced both opportunities and dangers in how AI interacts with online discourse. While the chatbot has, in some cases, shut down antisemitic narratives, it is also clear that bad actors are actively attempting to manipulate it. As AI tools continue to shape public perception, the risk of misinformation spreading under the guise of “neutral AI” remains a serious concern.